Voice is the new interface that will soon surround us in many places and in many ways. Voice content for Amazon Echo, Google Home, and Samsung devices is being developed by brands large and small.

We’re building voice-activated content strategies for our clients here at Convince & Convert — helping them take advantage of this fast-growing consumer interaction opportunity (for more on what we do in voice content, see Why The Time is Now for Voice-Activated Content).

I recently attended Voice Summit 2019, reported to be the largest-ever industry gathering of voice content strategists, developers, technologists, vendors and hardware platforms.

Here are the top 8 voice content trends that I synthesized during my time at the event and via our work with clients on voice apps.

The Best Voice Content Starts with User Needs

Similar to the early days of mobile apps, and even websites, there is a tendency among strategists and developers to think: “Let’s make a voice app!” Instead, the better approach is to carefully consider and research how consumers interact with the brand, what they actually need to know from that brand, and whether voice content is a suitable way to deliver it. After all, there is no law that says you MUST have voice-activated content. Is it genuinely a Youtility? If so, build it. If not, don’t!

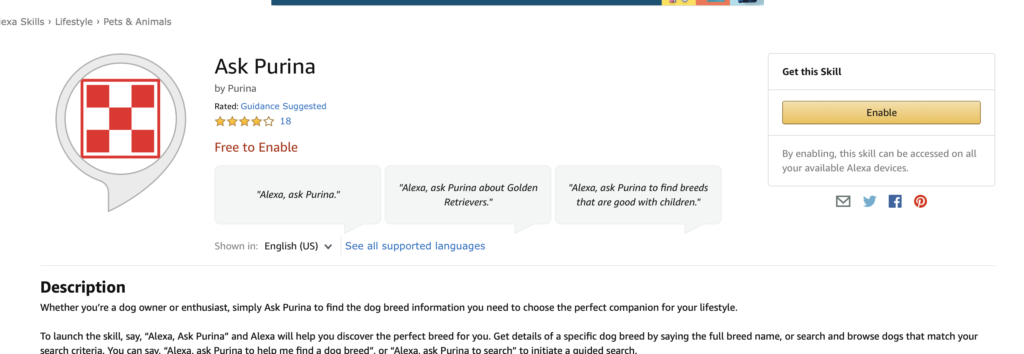

For example, Purina’s “Ask Purina” Alexa skill was born from consumers’ need to understand how different dog breeds behave, and which breed might be most appropriate as a new pet. They considered including audio promotions for dog food purchases but discarded that notion after realizing it would clutter the information asset, according to representatives from Mobiquity, the firm that developed the skill.

Convergence of Voice and Chat

The most effective voice applications today are typically news, information-retrieval Q&As or games. On the “brand Q&A” front, as with the Purina example mentioned above, the interaction flow of these apps is very similar to how consumers use chatbots.

In fact, the Ask Purina dog breed information Alexa skill would work quite well as a chatbot on a website and/or via Facebook Messenger or WhatsApp.

KLM Airlines saw this convergence too but came at it from the opposite direction. They took their very successful (and oft-used) messaging app and ported it to an Alexa voice skill for Amazon Echo devices.

Whether you’re going from voice to chat, or from chat to voice, it’s true that many information-based use cases will work similarly in both scenarios.

This is just one of the reasons we are happy to partner with Voicify. Voicify is a voice content management system that also allows Alexa Skills and Google Apps to instantly be ported to a chatbot with very little additional development work.

Convergence of Voice and Visuals

As was mentioned on stage at the Voice Summit 19 event, interfaces that have historically been visuals first (like your laptop or vehicle display) are now adding voice. I use Siri on my MacBook every day. Conversely, interfaces that have historically been voice first (like Amazon Echo) are now including visuals.

Many of the newly purchased smart speakers include screens, and the Amazon Echo Show and Google Home Hub devices are routinely priced below $100.

This has a few ramifications.

First, it geometrically increases the complexity of voice app development.

Second, it opens up much additional utility. The Purina app would be more useful if you could see pictures of dog breeds on a smart speaker with a screen. Not to mention the fact that voice is faster as an input but slower as an output. According to Tobias Dengel of Willowtree, we type 40 words per minute (wpm) on average, but speak 130. Conversely, we can read 250 wpm, but can only listen to 130. This has a lot of potential to make voice content truly multi-modal and user-friendly if we can speak what we want and read the results.

But third, if smart speakers become primarily devices with screens, what differentiates them from tablets, small laptops, or large phones?
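To put rough numbers on the speak-it-then-read-it idea, here is a small Python sketch built on the words-per-minute figures quoted above. The 20-word request and 100-word answer are made-up values for illustration, and the function names are hypothetical. Treat it as back-of-the-envelope arithmetic, not a usability study.

```python
from itertools import product

# Average words per minute, as quoted in the article (attributed to Tobias Dengel).
INPUT_WPM = {"type": 40, "speak": 130}
OUTPUT_WPM = {"read": 250, "listen": 130}


def seconds_for(words, wpm):
    """Time in seconds to get `words` through a channel running at `wpm`."""
    return words / wpm * 60


def round_trip_seconds(request_words, response_words, input_mode, output_mode):
    """Total time to enter a request and then take in the response."""
    return (seconds_for(request_words, INPUT_WPM[input_mode])
            + seconds_for(response_words, OUTPUT_WPM[output_mode]))


def fastest_combo(request_words, response_words):
    """Return the (input_mode, output_mode) pair with the shortest round trip."""
    return min(
        product(INPUT_WPM, OUTPUT_WPM),
        key=lambda combo: round_trip_seconds(request_words, response_words, *combo),
    )


# Hypothetical interaction: a 20-word request and a 100-word answer.
for input_mode, output_mode in product(INPUT_WPM, OUTPUT_WPM):
    total = round_trip_seconds(20, 100, input_mode, output_mode)
    print(f"{input_mode:>5} + {output_mode:<6}: {total:5.1f} s")
```

Under those assumptions, speaking the request and reading the answer takes roughly 33 seconds, while typing the request and listening to the answer takes roughly 76. The exact numbers will shift with message length, but the ordering follows directly from the quoted speeds.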

While I prefer smart speakers with a screen (I’m a Google Home Hub devotee, personally), I’m not sure blurring the differences between a smart speaker and an iPad is ultimately a win for these devices.

Format Clash Becoming a Problem

During the short history of the smart speakers and voice content epoch, Amazon has been the big boss. Their Echo devices essentially created the category, and that first mover advantage plus their massive promotional power allowed Amazon to run way out in front in the smart speakers world.

More recently, however, Google (and to a far lesser extent, Apple) have jumped into the fray with their own hardware devices, looking to out-Echo the Echo, with varying degrees of success. Recent industry reports suggest that Google’s market share of smart speakers is nearing 25% now, and given their deep pockets and interest in dominating anything search related (plus their ownership of smart home entity Nest), they aren’t going anywhere.

This provides consumers with a growing array of smart speaker choices on the hardware side, but creates a bedeviling and inefficient process for voice content developers. Today, the technology underpinnings of an Amazon Alexa skill and a Google Home app are quite different. Not to mention the brand-new Samsung Bixby voice platform, which is architected almost in reverse of how Amazon/Google do it.

Thus, the voice content world is in the midst of a standards dilemma that is redolent of Betamax vs. VHS, Internet Explorer vs. Netscape, iOS vs. Android, and Joe Jonas vs. whatever his brothers’ first names are.

It would be a LOT better if there were a single development path for voice content. But I’m not holding my breath that we’ll see such a thing, which is why voice content management systems like Voicify are critical today. Inside Voicify, when we build voice content, the Voicify technology automatically tweaks and twists the interactions and scripts to work on both Amazon and Google devices, without having to rewrite the voice application. A win, for certain.

Marketing and Rollout is Crucial

As the biggest and longest-running ecosystem, Amazon, of course, has the most voice applications approved and running: more than 60,000 in the United States alone. A few dozen new skills are added each day. And consumers’ ability to discover useful new skills is not a highlight of the current Alexa system. It’s essentially the online and/or voice-activated equivalent of walking through a very large library with a staggering variety of books, many of them shitty, and a fourth-rate librarian half-heartedly answering questions between bites of homemade casserole.

Put it this way: if you want people to find and use your voice-activated content, that responsibility falls on YOUR shoulders. Expect NOTHING from Amazon and Google in terms of promotion and discoverability. That way, you won’t be disappointed when that’s exactly what you receive.

When launching voice content, you simply must activate a thorough, multi-modal awareness and trial campaign that takes advantage of some combination of out-of-home, email, social, direct mail, hostage notes, and people dressing up like clowns and standing on street corners. Your mileage may vary.

Today, the capabilities of voice content actually outstrip consumers’ understanding of those capabilities. It’s an interesting inversion. Comcast (one of our favorite clients) spoke on a panel at Voice Summit 19 and reported that its customers uttered some 9 BILLION commands into their voice-activated X1 remote controls in 2018. But the vast majority of those voice commands are for the same small set of requests. They are currently working on new ways to teach customers all the other things the voice remote can do. In your own way, you’ll need to do the same when you roll out your voice-activated content.

Purposefully Limited Functionality

One of my favorite points at Voice Summit 19 came from Martine van der Lee from KLM Airlines who noted that when voice apps have a lot of functionality, working with them becomes more frustrating, not less.

She accurately underscored that voice content with several options (essentially a collection of apps within the umbrella app) requires an IVR-esque interaction between consumer and device. “Do you want to do this, or this, or this, or this, or this?” It’s phone tree hell but through a smart speaker. Not good.

For now, the best approach is to find a use case that’s worthy and build your voice content app to do just a couple things, exceedingly well. You’re better off having multiple apps or skills than stuffing more options into an existing voice execution. Note that the use of screens in smart speakers (see above) may ameliorate this problem, eventually.

Internal Voice Content Opportunities Abound

While most voice skills and apps have been developed for consumer use, there are many interesting use cases for internally focused voice-activated content. Especially since app usage can be locked down so that only approved persons or email addresses have access, the internal communications potential is significant.

For example, consider an “Ask HR” voice app that handles common questions about payroll, insurance, vacation policies, etc. Or an “inventory check” voice app that instantly scans current supplies on hand to see if a particular part is in stock. Or a “meeting killer” app where each participant in a team records a short project update, and all updates are batched together as a single audio file. Easy listening, time-efficient, and no conference room needed!

Ethics Are Out Front

There was a lot of talk about ethics at Voice Summit 19. It’s refreshing to see the pioneers in an emerging industry think through some of the societal ramifications of their work from the outset, rather than trying to gerrymander ethical considerations after the train has long since left the station (cough, cough — social media — cough, cough).

The New York Times conducted a thorough subscriber study on the viability of and attitudes toward smart speakers and voice content and found that the overwhelming majority of smart speaker users believe the default voice used by the speakers to be “White” in their inflection and outlook. This, in and of itself, has implications.

To combat this, KLM Airlines recorded the voices of hundreds of employees and built a custom poly-voice language engine that is intended to be as neutral as possible.

Other ethical considerations at this early stage include the ability (or lack thereof) of smart speakers to listen to tonality and respond differently based on perceived empathy needs, etc.

And of course, a big consideration is consumers’ distrust of the listening nature of smart speakers in general. My good friend Tom Webster of Edison Research presented data showing that consumer concern about smart speaker privacy increased markedly in the past year.

60% of people are concerned about privacy and the potential of hackers accessing their information via smart speakers.

Why This Matters

Voice-activated content via smart speakers and other devices is an early-stage, emerging field. Yet, the rapid adoption of these devices suggests that voice will continue to grow as an interaction ecosystem. We’ll keep you informed as we see these voice trends developing and shifting over time. Meanwhile, if we can help you think through your own approach to voice, please let us know.